SIGGRAPH, the world’s premier computer graphics showcase, begins its live, in-person event this week in Vancouver, where many leading scientists and tech creators will be sharing their latest work publicly for the very first time. Here’s a rundown of some of the most exciting new tech that has been introduced so far, from our perspective:

Feature Film Quality Markerless Mocap

Traditional motion capture normally requires expensive mocap suits and specialized cameras. Move.ai, a recently launched London startup working in partnership with Nvidia, has developed an AI-powered system that produces high-fidelity mocap from regular video footage. Unlike other markerless solutions, Move’s system has advanced pose estimation tools that can account for difficult-to-observe joints such as those in the spine. The demo presented at SIGGRAPH included mocap created from soccer game footage, with many players on the field all fully motion captured, which could potentially be used for viewing professional sports events in VR. The demo also included lower-end iPhone mocap software aimed at indie creators.

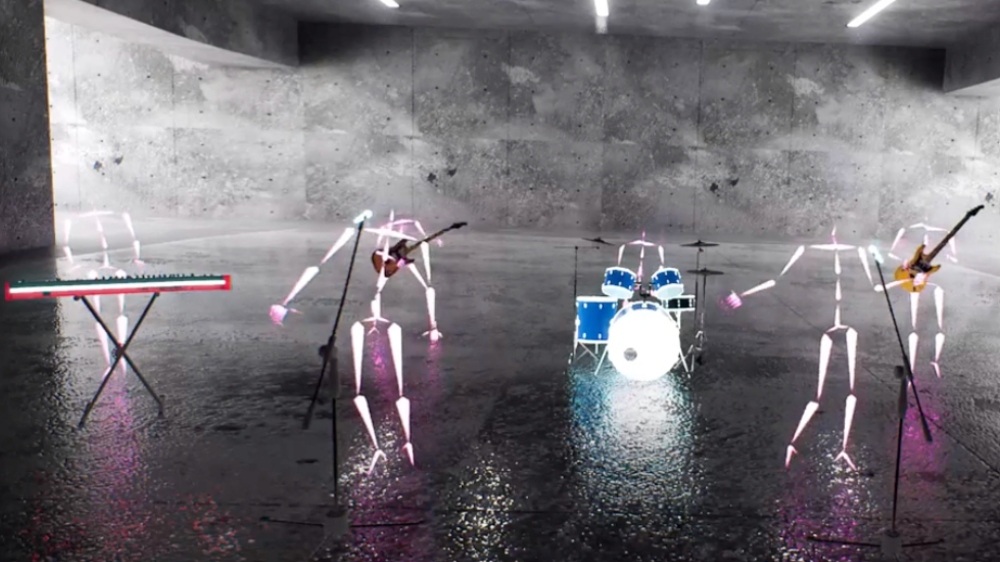

Volumetric Video Capture For Games, Interactive and Live Events

Led by former employees of Google and Pixar, Arcturus has created a system for volumetric video capture and post-processing, consisting of the individual products HoloSuite, HoloEdit, and HoloStream. Their system allows filmmakers to capture a performer’s entire body in 360 degrees so that it can be inserted into a 3D environment in games or other interactive entertainment (think the Tupac hologram at Coachella).

Arcturus is collaborating on projects with Dell and HP, and their tech is currently in use for production at Vancouver’s newest virtual production stage, Departure Lounge.

Voice Activated Camera Gimbals

Created by researchers at University College London, LookOut is a camera control system built from consumer-grade video gear and custom software that lets operators direct a gimbal-mounted camera using voice commands.

Users can define a personalized range of actions for the gimbal to perform, such as identifying, tracking, and re-framing specific actors in a shot, by using an app that can be programmed through a simple visual interface (as opposed to code).

The software uses advanced computer vision algorithms, similar to those behind Tesla’s self-driving cars, to identify people or objects in front of the camera. It can also transition seamlessly between different modes of performance: a shot could start out as a super-smooth Aaron Sorkin-style walk-and-talk before transforming into a Paul Greengrass-type shakycam mode.

While the system currently exists as a proof-of-concept, it is easy to imagine this technology incorporated into camera gimbals for consumer and professional markets alike.

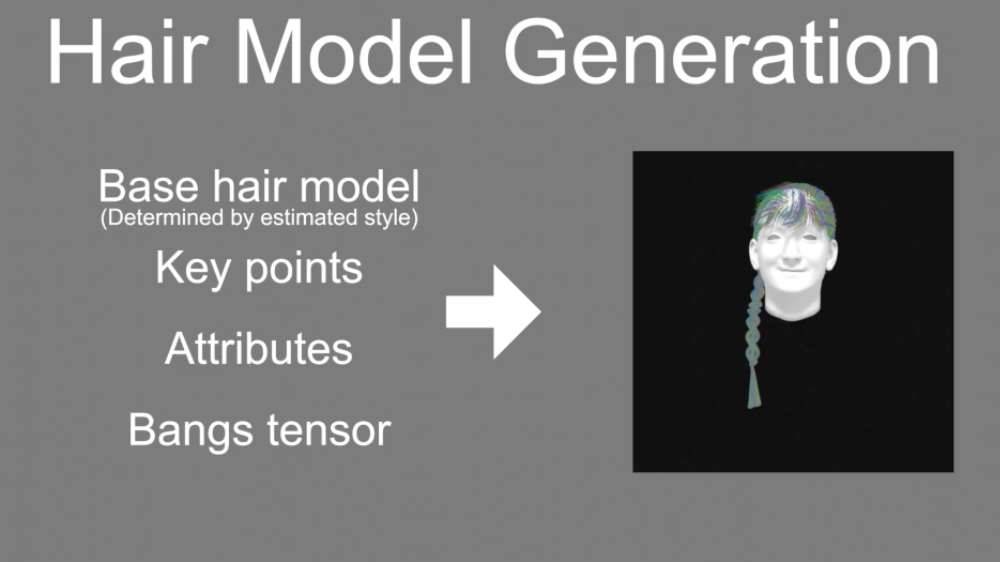

Image Generated CG Hair

Photoreal CG hair is one of the most complicated things to pull off, often requiring a team of dedicated specialists. Grooming physically plausible hairstyles is generally very time-consuming, but a group of researchers at Kyushu University in Japan has developed a novel technique for generating CG recreations of hairstyles from only a single image. Their method builds upon an earlier technology called Hair-GAN (a generative adversarial network, a type of deep learning model), which was able to create more rudimentary digital estimates of hair structure.