It seemed like a good idea to sign off our NAB 2012 coverage with a mention of the big splash, a major new release, something exciting – but although the exhibition seemed refreshingly, almost comfortingly replete with new stuff, it’s actually quite hard to pick out a shining example.

There have been a lot of interesting new cameras mentioned, at various stages of readiness. To recap cameras, looking far ahead, Panasonic’s 4K Varicam is just about visible on the horizon. Perhaps closer is Sony’s 4K high-speed device. Blackmagic’s camera is big news. But none of them is really a game changer.

Likewise, the march of 3D continues much as anyone with any experience would have expected it to. The Fraunhofer Institute in particular had a lot of interesting new things, both hardware and software. Ultimately, though, the key problems are not yet solved, or even really talked about.

So NAB 2012 was, if anything, a place where technology marched on in a large number of small ways. That’s good, considering how limited recent years have felt, presumably as a result of wider monetary gloom, and I’m beginning to believe reports in the general press that things are starting to turn around. The purpose of this article, then, is to elucidate some of the more interesting small ways in which technology marched on, in such a way that I don’t have to even try and make a coherent narrative out of it. Cute, eh?

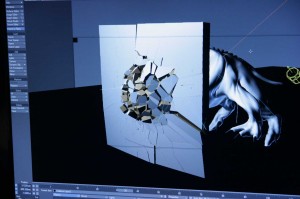

Remaining in the world of people who spend all day in dark rooms surrounded by glowing monitors, we find Maxon and NewTek hawking new features for Cinema 4D and Lightwave, respectively. Lightwave is half the price and enjoyed a full version bump at the show, as well as the ability to do Voronoi shattering of objects, and then apply rigid-body physics to those shattered parts. What’s particularly nice about the NewTek approach is that all of the shattered parts remain part of the original mesh. That is a significant cleanup compared with competing approaches, which break the object down into completely separate meshes and are vastly more time-consuming to work with.

There are complexities to this. Antialiasing doesn’t make sense, neither do depth fog or transparent objects, and it is therefore not a perfect solution. However, it’s still extremely useful in conjunction with something like Nuke, and can be used – for a trivial example – to do a layer of fog rising above the floor. It’s not a new feature for 3D graphics – Maya does it – but it’s a nice new feature for the motion graphics artist’s favorite 3D program.

While we’re down here in the postproduction basement, we should talk about an undeniably big deal at NAB 2012 – Smoke for Mac. My initial attitude was less cynical, on the basis that I’ve encountered companies that considered themselves “high-end” before and I’ve also watched as technological progress overwhelmed their claims of godhood. It’s good to see an outfit like Autodesk starting to recognize that the old guard high-end stuff is no longer going to be defined by the underlying hardware, but rather by capability, workflow, UI and ease-of-use. I can’t say that a half-hour chat with Autodesk helped reinforce this positive initial attitude. They’re still maintaining the we-don’t-talk-about-prices Linux version, although I do appreciate their dilemma with regard to UI changes risking alienation of their existing user base. This is by no means a trivial problem, nor one that’s limited to postproduction or indeed software in general. Both Avid (who ultimately took the plunge and reworked their UI) and the ProTools software (which didn’t) have suffered from long-term UI rot at some point, or still do, and it is a particularly sensitive issue in areas where users are, or at least claim to be, much more artists than they are computer people.

Still, Smoke for Mac has a rather thoroughly reworked UI and is at least some evidence that outfits like Autodesk are capable of reading the writing on the wall when it comes to the massive explosion of processing power available on desktop workstations, particularly with regard to GPU processing, which gives the average Mac or PC many times the silicon horsepower of a Smoke workstation of only a few years ago. There is a concern here that the Mac version won’t necessarily be a training ground for the Linux version due to those UI variances, but Autodesk don’t seem overly worried about this. That attitude does raise the concern that they’re trying to have their cake and eat it too with regard to maintenance of the old, traditional user base while still grabbing a chunk of the new, desktop computer market.

Based on what happened to Da Vinci when they tried to at least partially ignore GPU computing… good luck with that, Autodesk. Their refreshing willingness to accept the rise of desktop computers aside, I predict the imminent end of the Linux version. Still, Smoke – or at least some kind of Smoke – for $3,495? On one hand, nice. On the other hand, that’s more money than the entire Adobe creative suite.

So we’ve shot, cut, graded and output our masterwork. One problematic area, which has lain fallow for several years, is archiving. There have been a few frankly harebrained schemes concerning the photographing of 2D barcodes onto 35mm film stock, but it seems like a bit of a stretch to suspect that this will ever become a mainstream technique. It is, after all, only feasible because the volume processing of 35mm film stock for other purposes keeps prices sane – or at least somewhat sane, assuming you consider the price of any photochemical filmmaking product or service sane. LTO tape has a recent history of healthy growth in capability and a promising roadmap into really serious capacity and speed options soon. This is certainly not because of the film and television industry’s interest in it; tape has for decades been the favorite secure archive option of financial institutions and other high-value businesses, and it’s as well to remember that we’re really riding on their coat-tails in this regard.

Anyway, here are those numbers: up to 1.2TB per cart, depending on whether the discs inside are single or double-sided, single or double-layer. This compares reasonably with LTO5, which offers 1.5TB per tape. Sony claim 200Mbps write, 300Mbps read, which is significantly slower than LTO5, which offers 140MBps (1120Mbps). The target price per gigabyte is 10 US cents, whereas some online sources claim 6 cents for LTO5.
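To put those figures side by side, here is a rough back-of-envelope sketch in Python. It only uses the numbers quoted above; the `Medium` class and its method names are made up for illustration. It assumes decimal units (1TB = 1000GB), sustained transfer at the quoted rates, and that LTO5's 140MBps figure applies to writes, none of which is guaranteed in practice.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Medium:
    """Hypothetical container for the figures quoted in the article."""
    name: str
    capacity_tb: float   # decimal terabytes per cartridge or tape
    write_mbps: float    # quoted write rate in megabits per second
    cents_per_gb: float  # quoted or claimed media price, US cents per GB

    def write_mbytes_per_s(self) -> float:
        # 8 bits per byte: megabits/s -> megabytes/s
        return self.write_mbps / 8

    def hours_to_fill(self) -> float:
        # Assumes the quoted rate is sustained for the whole transfer.
        total_bits = self.capacity_tb * 1e12 * 8
        seconds = total_bits / (self.write_mbps * 1e6)
        return seconds / 3600

    def media_cost_usd(self) -> float:
        # Assumes 1 TB = 1000 GB.
        return self.capacity_tb * 1000 * self.cents_per_gb / 100


# Largest quoted cartridge, Sony's claimed write rate, target price.
sony = Medium("Sony optical disc archive", 1.2, 200, 10)
# 140 MBps expressed in megabits; 6 cents is the "some online sources" figure.
lto5 = Medium("LTO5", 1.5, 140 * 8, 6)

for m in (sony, lto5):
    print(f"{m.name}: {m.write_mbytes_per_s():.0f} MB/s write, "
          f"{m.hours_to_fill():.1f} h to fill, "
          f"${m.media_cost_usd():.0f} per full cartridge")
```

On these assumptions, filling the largest Sony cartridge takes a little over 13 hours against roughly 3 hours for an LTO5 tape, at about $120 versus $90 of media.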

So it’s smaller, slower, and more expensive. But there are upsides; these numbers will doubtless change as and when the thing gets any market penetration. It has an actual filesystem on it, meaning it can be used for arbitrary data storage, just like any other USB device – and the drives are USB3 connected (although this is really only barely relevant at 300Mbps). LTO, by comparison, has no filesystem as an intrinsic part of its specification. Third-party devices add this, but they’re usually network-attached and not as convenient as USB storage.

I still fear for the ability of the Sony optical disc archive to compete with LTO, which has a hell of a lot of traction in this particular niche industry. I don’t think we’ll see too many big shoots backing up uncompressed HD recording to it, anyway. It might be a pretty good fit, though, for people who are shooting what we might call smaller flash-based HD formats and need somewhere to dump them, particularly people who aren’t subject to extremely stringent insurance and completion-guarantee requirements. I suspect success may depend quite strongly on the price of the drives. LTO drives are notoriously expensive, especially those sold as part of a network-attached storage system with an FTP server and intelligence to provide a virtual filesystem. The Sony drives need to include a not-insignificant amount of robotics to deal with the disc handling, though, so it’s hard to predict which way this will go. No prices were available at the show.

Now I’ve run out of space and notes, and what I’d like to describe as a train of thought is meandering to a close – if a list of largely unrelated developments really counts as a train of thought. I suppose this article is, appropriately, a bit like NAB was this year: a collection of individually cool stuff, steady progress and interesting development, but no particular quantum leap in any area.