A new wave of filmmaking is entering Hollywood by way of virtual and remote production. Disney’s The Mandalorian exposed wider audiences to virtual filming aesthetics by pulling back the technological curtain through a series of popular behind-the-scenes videos.

Educators at USC’s Entertainment Technology Center (ETC) introduced their recent graduates to this new game-engine technology by transforming two sound stages into a testing ground for virtual previsualization, production design, real-time rendering of digital environments during filming, and collaboration from anywhere in the world via Teradek streaming devices.

Teaching the Reality of Virtual Filmmaking

The R&D vehicle chosen for this lesson: The Ripple Effect, a sci-fi short film about the human race’s last hope for survival. Co-writers Hannah Bang and Margo Sawaya would be given the chance to direct under the guidance of industry-experienced ETC faculty members Greg Ciaccio (ASC Associate Member & Workflow Chair of the Motion Imaging Technology Council) and Kathryn Brillhart (VES Global Board of Directors & ASC MITC Virtual Production Committee member).

ETC partnered with Global Trend Pro and Lux Machina to use their LED-wall stages, with Halon Entertainment and ICVR providing content for real-time playback on the LED walls. Brillhart exposed the filmmakers to a variety of virtual production tools and techniques, including real-time virtual location scouting within 3D environments using Unreal Engine.

“The goal of what we’re doing with each of these environments is to walk away from our shoot days with final visual effects that were captured in-camera,” explains Brillhart. “With our limited schedule, we relied on our Virtual Art Department (VAD) teams to get as close to photo-real imagery as possible, i.e., would the image look convincing through the camera’s lens, instead of to one’s eye? Making evaluations like this during pre-production can help save resources for shots that require more detail.”

COVID-19 Hits

However, just as filming was slated to begin, COVID-19 shutdowns went into effect and delayed production. Crew members would be required to comply with COVID safety protocols, and the stage operators at Lux Machina and the VAD artists from Halon Entertainment would need to collaborate from off set. Additionally, director Margo Sawaya would be stuck on the East Coast and would need to direct remotely. In a profession that depends on in-person collaboration, could this project get off the ground?

Teradek Wireless Monitoring Connects the Crew

Brillhart and Ciaccio viewed their new predicament as an opportunity.

“Remote monitoring and workflows were built into our original proposal before the shutdowns happened,” Brillhart recalls. “However, COVID safety became a way to challenge ourselves to push these technologies farther. It was very unique not to have the option to work another way.”

Ciaccio turned to Teradek products to ensure he could stream near-real-time camera feeds to everyone involved.

“I knew that we needed something that could live-stream to our on-set crew, our virtual collaborators, to Margo, and to our studio executives as well,” Ciaccio notes. “Of all of the products we researched, Teradek had the lowest latency and was the easiest to integrate, by far, so we knew that there would be almost no delay in communication on any end of the production.”

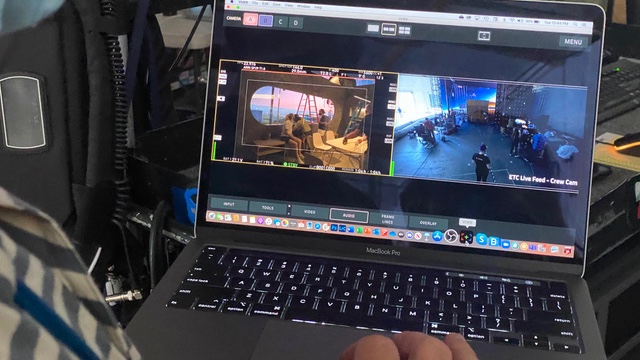

For on-set monitoring, Bolt 3000 wireless transmitters connected ‘A’ and ‘B’ cameras to multiple video villages. Camera feeds and stage views were fed into Cube encoders to stream live feeds over the internet, so collaborators working off-site could monitor filming and understand on-set interaction via Teradek’s VUER and Core apps on their personal smart devices.

“For interactivity with the set, especially with talent, Margo needed minimal latency,” explains Ciaccio. “We set her up with Core on her iPad. She could monitor the ‘through-the-lens’ feed and view the witness cameras to better understand crew interaction between takes. Margo would communicate with Hannah and say, ‘Okay, camera tilt up. Camera pan right. Actor, move left.’ We used MacBook Pros, iPads and iPhones to ensure faithful image reproduction. It worked really well, and the image quality was excellent.”

Creative Possibilities

Was the project worth the effort?

“Absolutely,” Ciaccio responds. “We would have never been able to test out all of these technical workflows, had we not been confronted with the challenge of absolute necessity. Not only did our crew learn new storytelling possibilities with virtual production, but we also discovered new collaborative possibilities through live streaming with Teradek products.”

“Computing, programming, networking, and pipeline development are new ingredients in our ecosystem that are making room for new combinations of skill sets to exist in this art form,” Brillhart adds. “It will be exciting to see how students apply their abilities with these new tools to which they have access early in their careers.”

The Ripple Effect will see festival play sometime in 2021.

Brillhart and Ciaccio will be discussing these emerging technologies on January 7th, and those interested can sign up here.