SIGGRAPH 2017, an annual interdisciplinary educational experience showcasing the latest in computer graphics and interactive techniques, has announced highlights from this year’s Emerging Technologies, Studio, and Real-Time Live! programs. SIGGRAPH 2017 will mark the 44th International Conference and Exhibition on Computer Graphics and Interactive Techniques, and will be held 30 July–3 August 2017 in Los Angeles.

The Emerging Technologies program gives attendees the chance to interact with surprising experiences that move beyond digital tradition, blur the boundaries between art and science, and transform social assumptions. Attendees can see, learn, touch, and experience state-of-the-art projects that explore science, high resolution digital-cinema technologies, and interactive art-science narratives. Watch the preview trailer.

“SIGGRAPH’s annual Emerging Technologies program features installations and presentations that combine the best in art and science in compelling and unique ways. These technologies often have a multitude of creative applications and uses, some of which aren’t always immediately apparent, but often help push their industries forward in incredible ways. As we imagine what our world will look like in 10 years, the work on display at Emerging Technologies this year will, without a doubt, have a large influence over the realities of that future,” said Jeremy Kenisky, SIGGRAPH 2017 emerging technologies chair.

There are 26 Emerging Technologies installations this year, along with 13 experience presentations. Among the highlights of this year’s program are:

MetaLimbs: Multiple Arms Interaction Metamorphism

MetaLimbs proposes a novel approach to body-schema alternation and artificial-limb interaction. It adds two robotic arms to the user’s body and tracks the global motion of the legs and feet relative to the torso, along with the local motion of the toes. These data are then mapped to the arm and hand motion of the artificial limbs and to their gripping fingers. Force feedback is added to the feet and mapped to the manipulator’s touch sensors.

Touch Hologram in Mid-Air

This demonstration adds a method for touching holographic objects, based on a touch development kit from Ultrahaptics, the only mid-air tactile feedback technology. It provides a touch feeling without any mechanical equipment in the visualization area (which would be inconsistent with the hologram concept). Touch Hologram in Mid-Air is a singular experience that illuminates a broad field of future research. Its aim is to give physical presence to intangible objects.

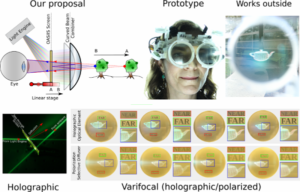

Varifocal Virtuality: A Novel Optical Layout for Near-Eye Display

This project employs a novel wide-FOV optical design that can adjust the focus depth dynamically, tracking the user’s binocular gaze and matching the focus to the vergence. Thus the display is always “in focus” for whatever object the user is looking at, solving the vergence-accommodation conflict. Objects at a different distance, which should not be in focus, are rendered with a sophisticated simulated defocus blur that accounts for the internal optics of the eye.
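To make the idea of matching focus to vergence concrete, the following is a minimal geometric sketch rather than the project's actual method. It assumes symmetric convergence on a point straight ahead, and the function names and the interpupillary distance parameter are illustrative choices, not taken from the project.

```python
import math


def vergence_distance_m(ipd_m: float, vergence_angle_rad: float) -> float:
    """Distance to the fixation point, assuming both eyes converge
    symmetrically on a point straight ahead (an illustrative assumption)."""
    if vergence_angle_rad <= 0:
        # Parallel gaze lines: the user is looking at optical infinity.
        return math.inf
    return (ipd_m / 2) / math.tan(vergence_angle_rad / 2)


def focus_diopters(distance_m: float) -> float:
    """Focus demand in diopters (1 / distance in meters)."""
    return 0.0 if math.isinf(distance_m) else 1.0 / distance_m
```

Under these assumptions, a display controller would read the vergence angle from the eye tracker, convert it to a distance, and drive the focus element to the corresponding diopter value.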

The theme for the SIGGRAPH 2017 Studio program is “Cyborg Self: Extensions, Adaptions, and Integrations of Technology within the Body.” As the use of wearable devices continues to grow, humans are developing an ever-expanding toolset of extensions, insertions, and interventions that lead us to question the future of hybridization in our physical evolution. The Studio will present a broad range of concepts related to the convergence of the physical body and evolving technologies with an emphasis on wearables, e-textiles, bio-tech, and sensory extensions across physical and virtual platforms. Watch the preview trailer.

Brittany Ransom, SIGGRAPH 2017 studio chair, said, “Through our program, attendees get to be hands-on with evolving technologies in an age where those technologies change ever so quickly. Attendees can interact with everything from a large format printer to a magnetic levitation installation and even a live giraffe! Our workshops allow attendees to learn a new skill set–they get to make something unique or do something different with their hands that they’ve never done before.”

This year’s Studio program will feature seven installations and eight workshops. Among the highlights are:

LeviFab: Stabilization and Manipulation of Digitally Fabricated Objects for Superconductive Levitation

This study focuses on superconductive levitation, which has not yet been well explored for entertainment applications. One promising approach combines computational fabrication and manipulation methods to achieve superconductive levitation and manipulation of 3D-printed objects. These methods of levitation have wide applications, not only in entertainment but also in other HCI contexts.

Textile++: Low Cost Textile Interface Using Principle of Resistive Touch Sensing

In recent years, wearable computing has become widespread yet expensive. Textile++, a fiber-based system that can be applied to various fields, confronts these cost challenges. Based on the principle of resistive touch sensing, Textile++ consists of cloth that can be applied directly to conventional clothing through folding and sewing, and can be manufactured at very low cost.
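As a rough illustration of the general principle of resistive touch sensing, and not of Textile++'s actual circuit, the sketch below treats a resistive strip as a voltage divider: a touch taps the strip at some point, and the measured voltage is proportional to the position of that point. The function name, the 10-bit ADC range, and the strip length are assumptions made for the example.

```python
def touch_position_mm(adc_value: int, adc_max: int = 1023,
                      strip_length_mm: float = 100.0) -> float:
    """Estimate where a uniform resistive strip was touched.

    Assumes the strip is driven from 0 V at one end to the ADC reference
    voltage at the other, so the reading scales linearly with position.
    All parameters are hypothetical defaults for illustration.
    """
    if not 0 <= adc_value <= adc_max:
        raise ValueError("ADC reading out of range")
    return strip_length_mm * adc_value / adc_max
```

A two-dimensional sensor built on the same idea would typically take one such reading per axis, alternating which layer is driven and which is measured.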

Other highlights within the Studio program include an Epson-sponsored t-shirt design competition and a special appearance by “Tiny,” a giraffe, who will be available for two animal drawing sessions with Otis College of Art and Design’s Gary Geraths.

Last but not least, the SIGGRAPH 2017 Real-Time Live! program is a one-night-only event that will showcase the latest trends and techniques for pushing the boundaries of interactive visuals. Watch the preview trailer.

“This year’s Real-Time Live! will represent a true spectacle of graphics rendering techniques from the most creative and technologically advanced minds across our industry,” said SIGGRAPH 2017 Real-Time Live! chair Cris Cheng.

Real-Time Live! will feature 10 presentations. Among the highlights are:

The Human Race

The Mill and Epic present The Human Race, an interactive film made possible by real-time VFX, blurring the line between production and post. Powered by UE4, The Mill’s Cyclops production workflow system, and The Mill BLACKBIRD, the film revolutionizes the conventions of digital filmmaking to create a film anyone can play.

Penrose Maestro

Penrose Maestro is a suite of tools that enables artists to review and work on a VR story in a collaborative, multi-user native VR environment. It takes the artists into the production together, allowing for perfect spatial context when taking notes from a director.

Star Wars Battlefront VR: Piloting an XWing for the First Time

Star Wars Battlefront VR was released to critical acclaim in December 2016. This is the first VR game ever created using Frostbite, the same engine that powers games like Battlefield. The production team at Criterion Games faced the challenge of creating a VR game on PS4 with visuals that match modern AAA games.